Building an enterprise knowledge base for near-perfect AI accuracy

The problem: AI that makes things up

Large Language Models have a critical flaw: they generate confident-sounding responses that are sometimes completely false. For businesses, this creates real risks—a support chatbot inventing refund policies, an HR assistant citing procedures that don't exist.

The solution is RAG (Retrieval-Augmented Generation): an architecture that forces your AI to consult your official documents before answering, and respond only with verified information.

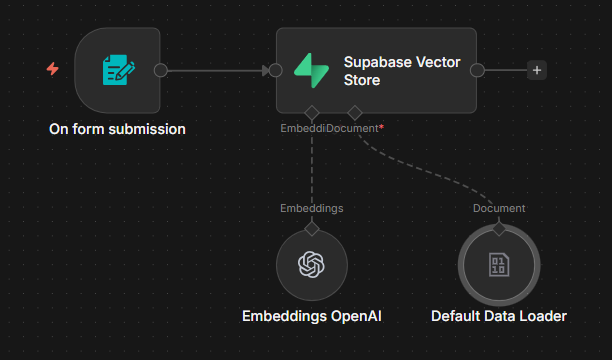

How document ingestion works

Before your AI can answer questions, your documents must be indexed in a Vector Store. Here is the n8n workflow we use:

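The process is straightforward: each document is split into segments, each segment is converted into an embedding, and the embeddings are stored in the Vector Store.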

When a user asks a question, the system finds the most relevant segments and provides them to the AI as context—ensuring every answer is grounded in your actual documentation.
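The ingestion side can be sketched in a few lines of Python. This is a minimal illustration, not the n8n workflow itself: `toy_embed` is a hypothetical stand-in for a real embedding model, the in-memory list stands in for the Vector Store, and the chunk size and overlap are arbitrary assumptions.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str               # document name, kept for source attribution
    text: str                 # the segment itself
    vector: dict[str, float]  # toy embedding of the segment


def split_into_chunks(text: str, size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that overlap by `overlap` words."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def toy_embed(text: str) -> dict[str, float]:
    """Stand-in for a real embedding model: L2-normalised word counts."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def ingest(source: str, text: str, store: list[Chunk]) -> int:
    """Chunk a document, embed each chunk, append to the store; return chunks added."""
    pieces = split_into_chunks(text)
    for piece in pieces:
        store.append(Chunk(source=source, text=piece, vector=toy_embed(piece)))
    return len(pieces)
```

Overlapping windows are a common choice because a sentence that straddles a boundary still appears whole in at least one segment. In a real deployment the word-count vector would be replaced by a call to the embedding model, and the list by an insert into the Vector Store.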

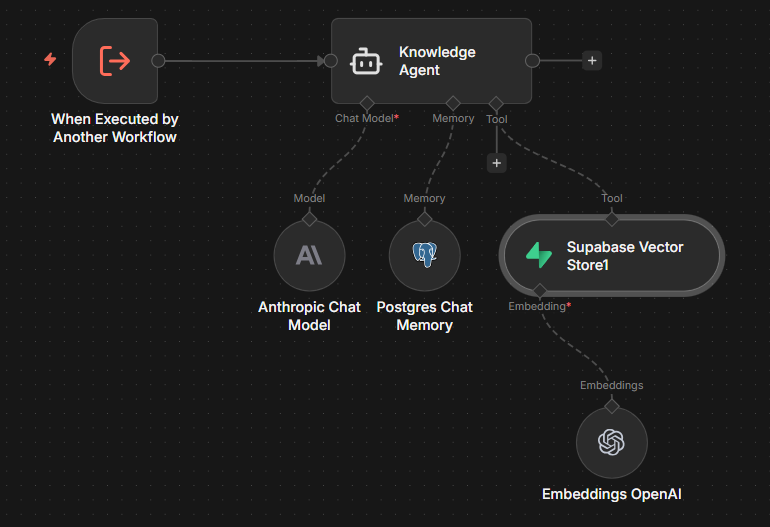

How data retrieval works

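Ingestion is only half the equation. When a user queries the system, the retrieval process ensures the AI receives the right context: the question is converted into an embedding, the most similar segments are looked up in the Vector Store, and those segments are passed to the AI alongside the question.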

This retrieval mechanism is what curbs hallucinations: the AI answers from passages it was explicitly given in the context window rather than from whatever it absorbed during training.
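As a rough sketch of the retrieval step, the Python below ranks stored segments by cosine similarity to the question and keeps the top few. Everything here is assumed for illustration: `toy_embed` is a hypothetical word-count stand-in for a real embedding model, the store is a plain list rather than a database, and the `k` and `min_score` defaults are arbitrary.

```python
import math
import re
from collections import Counter


def toy_embed(text: str) -> dict[str, float]:
    """Stand-in for a real embedding model: L2-normalised word counts."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {word: c / norm for word, c in counts.items()}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity of two unit-length sparse vectors (a plain dot product)."""
    return sum(weight * b.get(word, 0.0) for word, weight in a.items())


def retrieve(
    question: str,
    store: list[tuple[str, dict[str, float]]],
    k: int = 3,
    min_score: float = 0.1,
) -> list[tuple[float, str]]:
    """Return up to k (score, segment) pairs, best first, dropping weak matches."""
    query = toy_embed(question)
    scored = [(cosine(query, vector), text) for text, vector in store]
    relevant = [pair for pair in scored if pair[0] >= min_score]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return relevant[:k]


segments = [
    "Refund requests are accepted within 30 days of purchase.",
    "Vacation time accrues monthly for all employees.",
    "The API rate limit is 100 requests per minute.",
]
store = [(segment, toy_embed(segment)) for segment in segments]
results = retrieve("refund within 30 days", store)
```

The `min_score` cutoff matters as much as the ranking: when nothing in the store is close to the question, returning no segments lets the AI say it does not know instead of answering from loosely related text.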

The role of prompt engineering

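RAG retrieves the right information. The system prompt ensures the AI uses it correctly: it instructs the model to answer only from the retrieved context and to say so when the context does not contain the answer.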

This combination of curated data and strict instructions virtually eliminates hallucinations.
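To make the idea of strict instructions concrete, here is one possible shape for such a prompt, sketched in Python. The wording of `SYSTEM_PROMPT` is an assumption for illustration, not the prompt used in the workflow, and the `role`/`content` message layout assumes a chat-style API.

```python
# Hypothetical prompt wording; adjust it to your own policies and tone.
SYSTEM_PROMPT = (
    "You are an assistant for company documentation.\n"
    "Answer using ONLY the numbered context passages below.\n"
    "Cite the passage numbers you relied on, for example [1].\n"
    "If the context does not contain the answer, reply exactly: "
    '"I could not find this in the documentation."\n'
)

NO_CONTEXT = "(no relevant passages were retrieved)"


def build_messages(question: str, passages: list[str]) -> list[dict[str, str]]:
    """Combine the fixed instructions, the retrieved passages, and the question."""
    if passages:
        # Number the passages so the model can cite them and answers can be audited.
        context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    else:
        context = NO_CONTEXT
    return [
        {"role": "system", "content": f"{SYSTEM_PROMPT}\nContext:\n{context}"},
        {"role": "user", "content": question},
    ]


messages = build_messages(
    "What is the refund window?",
    ["Refund requests are accepted within 30 days of purchase."],
)
```

Numbering the passages is what links an answer back to its source, and giving the model an explicit fallback sentence offers it a sanctioned way out when the documents are silent.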

Business impact

Before RAG

- AI invents plausible-sounding answers

- No way to trace where answers come from

- Updating knowledge requires retraining

- Legal and compliance risks

After RAG

- AI responds only with verified facts

- Full auditability and source attribution

- New documents are searchable as soon as they are indexed

- Controlled, consistent information

Practical applications

Customer support

Chatbots that quote your actual policies, not invented ones.

Employee onboarding

New hires get accurate answers about real company procedures.

Sales enablement

Representatives access correct product specifications and pricing.

Compliance

Responses are traceable and auditable for regulatory requirements.

Getting started

The technical implementation requires a Vector Store (we recommend Supabase with pgvector), an embedding model (OpenAI), and an orchestration layer (n8n). The setup can be completed in a matter of hours, and documents can be added incrementally.

The investment is modest. The reduction in risk and the improvement in response quality are substantial.